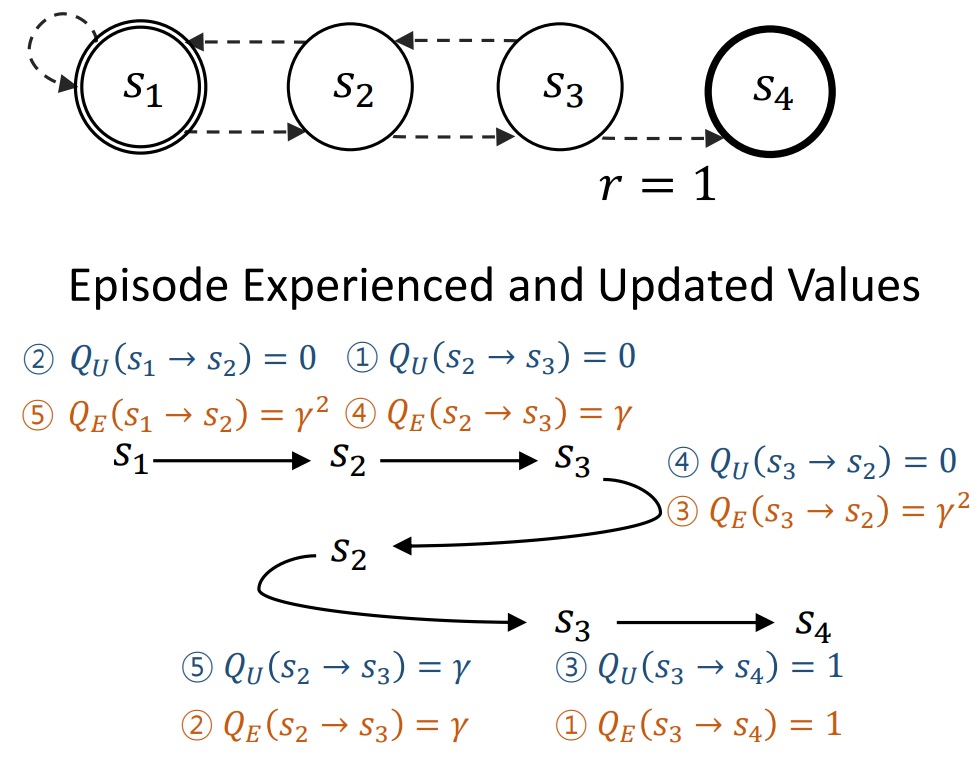

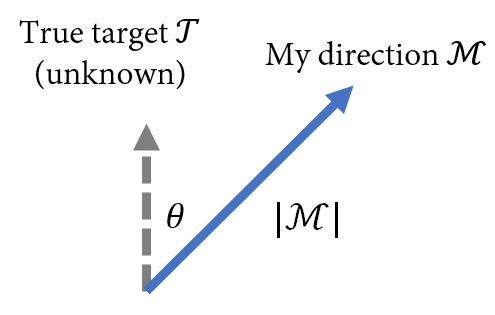

I view life as a meta-reinforcement learning task — reminiscent of MuJoCo's Ant-direction, where an agent learns to walk toward a hidden goal direction it can never observe directly.

Everyone has their own optimal life direction T: unique, often obscured. The objective is to maximize the cumulative reward

r = M · T,

the dot product of M — how we choose to live — and the unseen true direction T. Two things matter: the angle θ between them, and the magnitude |M|.
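One way to see why both quantities matter is to expand the dot product. This sketch assumes M and T are ordinary Euclidean vectors, which the text does not state explicitly:

```latex
r = M \cdot T = |M|\,|T|\cos\theta
```

Since |T| is fixed for a given person, r grows with |M| and with cos θ, which peaks at θ = 0. Once |θ| exceeds 90°, cos θ turns negative, so a larger |M| only makes r more negative.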

I was fortunate to have guidance from two advisors who instilled in me the importance of both — minimizing |θ| by choosing the right direction, and maximizing |M| by moving with conviction.